Anonyome Labs strives to support the decentralized identity community and to further enhance interoperability between different networks. Doing this also helps us grow and strengthen our Sudo Platform decentralized identity services. We put privacy at the forefront of our solutions; protecting personal data and individual privacy and upholding international privacy standards are central to our core values. So we were excited to be invited to join the Indicio MainNet as a validator node operator and to set up the ledger’s 10th node.

Here, we go through how we went about standing up our Indicio node. In brief:

- The Indicio MainNet is an enterprise-grade ledger for use by decentralized identity applications.

- We brought up the node using Amazon Elastic Compute Cloud (EC2) instances within a Virtual Private Cloud (VPC).

- We pulled the validator algorithms from the open-source project, Hyperledger Indy.

- A supporting machine operates the command line interface used to perform steward operations on the ledger.

- We used security groups at the network interface level to create a firewall.

- We set up monitoring in AWS CloudWatch using a variety of bash scripts in conjunction with Ubuntu and AWS-provided utilities.

- We created a regular maintenance schedule.

The Indicio MainNet is an enterprise-grade network for use by decentralized identity applications. It is a distributed ledger which allows decentralized identifiers, verifiable credential schemas and other non-personally identifiable information to be written to it.

MainNet is based on Hyperledger Indy, an open-source project for distributed ledgers. A number of Hyperledger Indy ledgers exist for a variety of purposes. A different provider runs each ledger (e.g. the Sovrin network). All Indy-based ledgers are intended to be interoperable by design, meaning their DIDs, DID methods and DID Docs are cross-ledger compatible. Anonyome Labs supports compatibility with different Indy-based ledgers.

As an emerging developer of decentralized identity software, we know relationships with existing ledgers are invaluable, so when new ledgers are brought up in the decentralized identity space, our team looks for opportunities to get involved. In this case, we were proud to be invited to join the Indicio Node Operator Program to run a production-level MainNet ledger node.

Some technical requirements must be met to become a validator on the Indicio MainNet. Since Anonyome joined the MainNet directly (i.e., as opposed to progressing through sandboxed test environments first), we made additional security and efficiency decisions to ensure we set up a robust validator node.

The two nodes

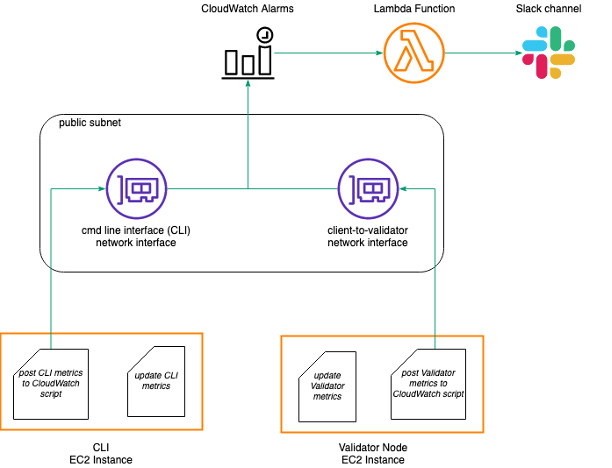

Figure 1 shows the architecture of the validator node and supporting systems. The validator node runs the validator algorithms developed under the open-sourced project Hyperledger Indy. We set up another node to run the command line interface (CLI) used to interact with the ledger. While it was not a requirement for the CLI node to be hosted on an AWS server, it better suited our use case because it kept the node accessible. We have node administrators across Australian and US time zones, so it was desirable to host the CLI on an EC2 instance that all administrators could manage at any time.

Both of these nodes run Ubuntu 16.04 LTS (an upgrade to Ubuntu 20.04 is imminent), with the CLI node as a t2.micro instance and the validator node as a t3.xlarge instance. T2 and T3 are different EC2 instance types offered by Amazon. Each of these instance types offers tiers that differ in CPU, RAM and price. In terms of storage, we allocated the CLI node one 10GB disk. This is sufficient for the administrative duties carried out on that node. We allocated the validator instance two EBS disks, one of 10GB and the other of 250GB. The 250GB disk is used for the Indy ledger databases. All of these disks are encrypted at the volume level, and we allocated an extra 2 CPUs to the validator node to account for the overhead of encryption. Our nodes run in AWS Asia Pacific (Sydney) ap-southeast-2.

The validator node provides capacity to run the validator consensus algorithm which keeps its node database in sync with the network. The CLI node is used for ledger related operations and validator node administration. The separation is for security purposes, because the two tasks require different firewall settings and stored credentials.

Figure 1 also shows two subnets: a public subnet and an inter-validator subnet. Within these two subnets are three network interfaces:

- the CLI interface

- the client-to-validator network interface

- the validator-to-validator network interface.

There is a single network interface attached to the CLI instance. This is called the CLI network interface. It resides within the public subnet to provide an endpoint for communications involving ledger utility. This includes SSH requests from the Anonyome node administrators, access to software repositories, and access to CloudWatch alarms for monitoring.

There are two network interfaces attached to the validator node: the validator-to-validator network interface and the client-to-validator network interface. The validator-to-validator network interface resides within the inter-validator subnet. An AWS elastic IP endpoint within the inter-validator subnet allows for communication with other validator nodes.

The client-to-validator network interface is in the public subnet. This provides a publicly exposed endpoint, used for cloud agent and wallet communications to the validator node.

Security groups as a firewall

The firewall is implemented using AWS security groups that are attached to network interfaces. Security groups control inbound and outbound traffic, allowing administrators to limit traffic to certain addresses on specific ports and message protocols. Because the security groups are applied at the network interface level, each interface can be given its own firewall rules.

Security groups contain rules that permit traffic to or from certain IP addresses over specific protocols; any traffic that no rule permits is denied. Each rule targets a certain type of traffic. For example, there may be a rule that permits SSH requests from certain IP addresses into the nodes. Another rule could permit outbound traffic to the address of AWS CloudWatch to send monitoring updates over the HTTPS protocol. The three network interfaces have different sets of security groups, determined by what types of traffic each network interface should accept or send.
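
To make the idea concrete, here is a minimal Python sketch of how an allow-list of this kind decides whether traffic gets through. It is an illustration only, not our actual configuration: the `Rule` class, the `is_allowed` helper, the `CLI_RULES` list and the IP ranges (reserved documentation ranges) are all hypothetical, and the sketch ignores details such as port ranges and the stateful handling of return traffic.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class Rule:
    """One allow rule, loosely modelled on a security group entry (hypothetical)."""
    direction: str   # "inbound" or "outbound"
    protocol: str    # e.g. "tcp"
    port: int        # a single port, for simplicity
    cidr: str        # peer address range the rule applies to


def is_allowed(rules, direction, protocol, port, peer_ip):
    """Return True if any rule permits the traffic; otherwise it is denied."""
    peer = ip_address(peer_ip)
    return any(
        r.direction == direction
        and r.protocol == protocol
        and r.port == port
        and peer in ip_network(r.cidr)
        for r in rules
    )


# Hypothetical rule set for the CLI interface; addresses are placeholders.
CLI_RULES = [
    Rule("inbound", "tcp", 22, "203.0.113.0/24"),   # SSH from administrators
    Rule("outbound", "tcp", 443, "0.0.0.0/0"),      # HTTPS, e.g. monitoring updates
]

print(is_allowed(CLI_RULES, "inbound", "tcp", 22, "203.0.113.10"))  # True
print(is_allowed(CLI_RULES, "inbound", "tcp", 22, "198.51.100.7"))  # False
```

Giving each network interface its own list like `CLI_RULES` mirrors the approach described above, where each interface gets a different set of security groups.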

Monitoring the nodes

Monitoring is set up using AWS CloudWatch, Slack hooks and bash scripts. We chose CloudWatch so we could set up alarms on custom metrics. Metrics are values of certain node states that must be monitored, such as the presence of files with permissions that leave them vulnerable to compromise. Anonyome uses a cron job that periodically runs a script to monitor certain system states and write these states to a log file. The same cron job also runs another script that then reads the log file and posts the state of each metric to CloudWatch. If the metric is in a predefined alarmable state, CloudWatch will run a Lambda function that posts a warning message to a dedicated Slack monitoring channel. This is how Anonyome administrators monitor the Indicio nodes. You can see this approach in Figure 2.
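
The collect-then-publish flow can be sketched in a few lines. Our production scripts are written in bash; the Python below is only an illustrative stand-in, and the function names, the `WorldWritableFiles` metric name, the log format and the file names are all assumptions made for the example. It counts world-writable files in a directory, writes the result to a log file, then reads the log back into entries shaped like the `MetricData` items that CloudWatch's PutMetricData operation accepts.

```python
import os
import stat
import tempfile


def count_world_writable(directory):
    """Count regular files under `directory` that any user may write to."""
    count = 0
    for root, _dirs, files in os.walk(directory):
        for name in files:
            mode = os.lstat(os.path.join(root, name)).st_mode
            if stat.S_ISREG(mode) and mode & stat.S_IWOTH:
                count += 1
    return count


def write_log(log_path, metrics):
    """Collector step: write one 'name value' line per metric."""
    with open(log_path, "w") as log:
        for name, value in metrics.items():
            log.write(f"{name} {value}\n")


def read_log(log_path):
    """Publisher step: turn the log back into CloudWatch-style metric entries."""
    entries = []
    with open(log_path) as log:
        for line in log:
            name, value = line.split()
            entries.append({"MetricName": name, "Value": float(value), "Unit": "Count"})
    return entries


# Demo on a throwaway directory (POSIX permissions assumed).
with tempfile.TemporaryDirectory() as workdir:
    watched = os.path.join(workdir, "watched")
    os.mkdir(watched)
    for name, mode in (("safe.conf", 0o644), ("risky.conf", 0o666)):
        path = os.path.join(watched, name)
        open(path, "w").close()
        os.chmod(path, mode)

    log_path = os.path.join(workdir, "node_state.log")
    write_log(log_path, {"WorldWritableFiles": count_world_writable(watched)})
    metric_data = read_log(log_path)

# With boto3 (an assumption, not used here), these entries could be sent via
# cloudwatch.put_metric_data(Namespace="<your namespace>", MetricData=metric_data).
print(metric_data)
```

Keeping the collector and the publisher as separate steps joined by a log file, as in the cron job described above, means the log also serves as a local record of each reading, independent of what reaches CloudWatch.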

Going forward

After joining MainNet, nodes need to be kept up to date with the latest software. Node administrators are responsible for applying security patches every week where necessary. We have set up an AMI management policy to create regular backups of the validator and CLI nodes.

You can now see our node active on MainNet monitoring tools.

Photo By Chan2545