Personal data or personal information is any information that can be used to identify, contact, or locate a specific person, either directly or indirectly. It’s sometimes called personally identifiable information (PII) in technical and legal contexts.

Personal data is a key concept in data protection, privacy law, and cybersecurity, because it includes highly sensitive and personal details about a person which can cause significant harm if exposed.

While formal definitions vary within the United States and internationally, personal data or PII generally include both direct identifiers (like a name or government ID number) and indirect identifiers (like date of birth or location data) that can reveal someone’s identity when combined with other details.

In the United States, the National Institute of Standards and Technology (NIST) defines personally identifiable information (PII) as any information about an individual that can be used to distinguish or trace their identity. This includes obvious identifiers, such as a name, Social Security number, or date of birth, as well as other information that is linked or linkable to a person, such as medical records, education history, or financial details. Linked information is data already connected to an individual’s identity, while linkable information is data that could be connected in the future.

Under the General Data Protection Regulation (GDPR), the European Union uses the term personal data and defines it as any information relating to an identified or identifiable natural person. A person is identifiable if they can be recognized directly or indirectly, such as through a name, identification number, location data, online ID, or factors like physical, genetic, or economic characteristics.

Countries such as Canada, Australia, Japan, and South Korea have national definitions of PII or personal data. While the legal wording may differ, the intent is similar: to protect information that could identify an individual, either on its own or in combination with other data.

Personal data or PII can be divided into sensitive and non-sensitive categories, depending on the potential risk if it’s exposed:

Sensitive PII includes information that could lead to serious harm (such as identity theft, financial loss, or discrimination) if revealed. Examples include government-issued IDs, financial account details, health records, or biometric data.

Non-sensitive PII includes information like gender or postal codes. While relatively harmless on their own, these details can become sensitive when combined with other data.

According to the NIST in the United States, examples of personally identifiable information include:

Full name, maiden name, or alias

Identification numbers (Social Security, passport, driver’s licence, tax ID, or patient number)

Contact information (street address, email address, or phone number)

Device identifiers (IP or MAC address)

Biometric data (photos, fingerprints, facial geometry, retina scans, or voice signatures)

Personal or property records (vehicle registration, title numbers)

Information linked to identity, such as date of birth, race, religion, employment, education, health, or finances.

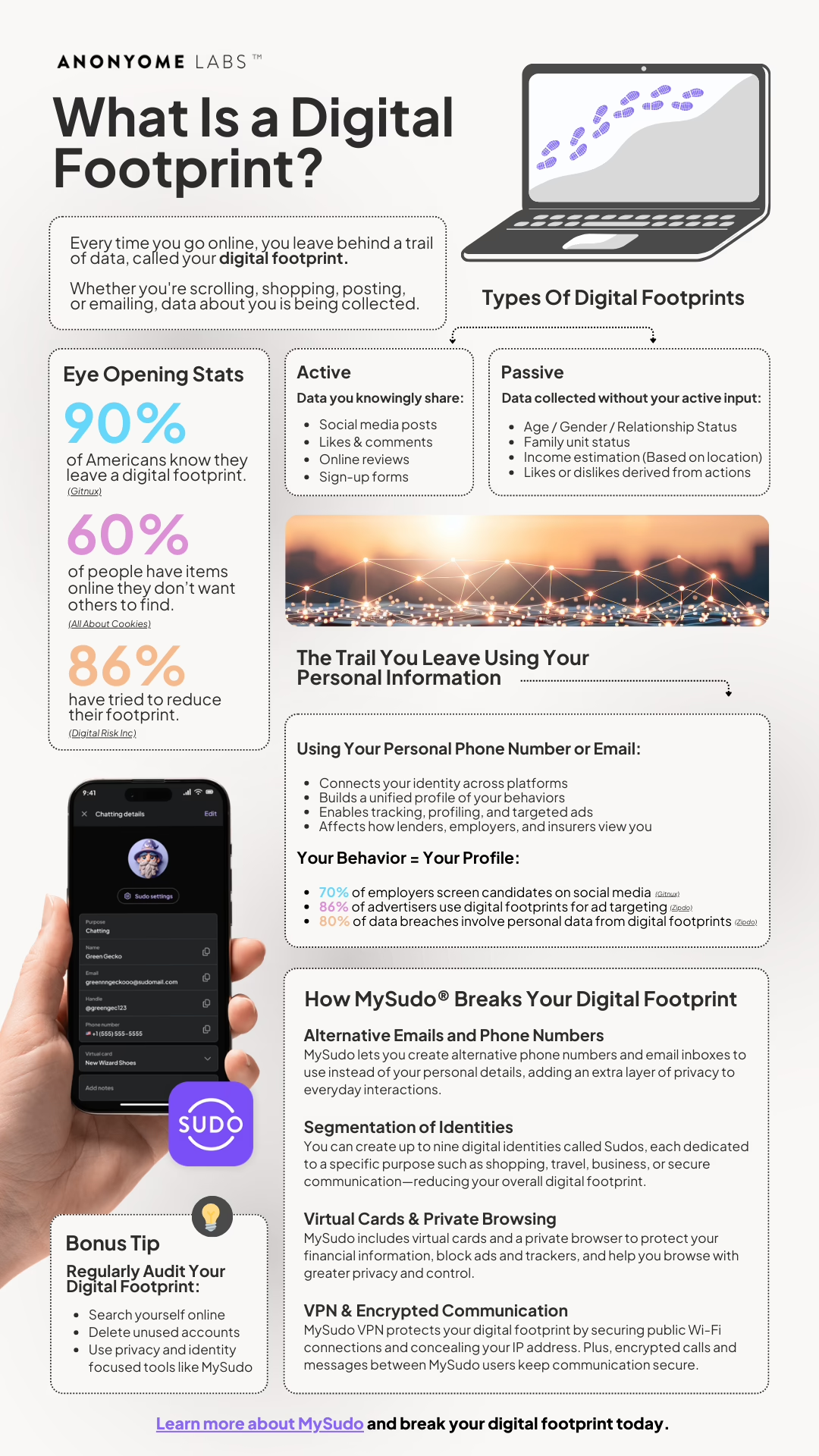

Personal data makes up a lot of what we call a person’s digital footprint or digital exhaust. It is also the “currency” of the data economy and drives personalized or targeted advertising online.

Every time we go online, we leave behind small pieces of personal information, often without realizing it. We share names, email addresses, phone numbers, and browsing habits when we sign up for “free” services like social media, email platforms, or shopping sites. But these services aren’t truly free: our data is the price of access.

Companies collect and use our information to personalize ads, improve targeting, and sell insights to other businesses. Even simple actions like liking a post, accepting cookies, or using a loyalty card add to the growing digital trail that reveals who we are, what we like, and how we live. Even banks collect and sell customer data.

Specifically:

Targeted advertising and marketing – Personal data allows websites and platforms to show highly targeted ads based on users’ demographics, interests, behaviours, and locations. Advertisers pay a premium for precision targeting, which increases conversion rates.

Produce and service personalization – Streaming services and other companies use personal data to tailor experiences, recommendations, and content.

Analytics and business intelligence – Data about users’ actions and preferences helps companies understand markets, forecast demand, and improve products.

Retailers analyse customer data to plan inventory and pricing, and apps track user behaviour to enhance design and usability.

Innovation and AI development – Artificial intelligence depends on large volumes of personal data for training models that recognise speech, faces, patterns, and preferences.

Platform ecosystems and network effects – Tech giants like Google, Meta, Amazon, and Apple have built ecosystems where personal data flows between services.

Data as a commodity – Data brokers (also called information brokers or data aggregators) are companies that collect personal information from a variety of sources, analyze it, and sell or share it with other organizations. Data brokers typically do not have a direct relationship with the people whose data they handle. Instead, they gather and infer data from public records, online activity, loyalty programs, social media, and commercial sources. Data broking is big business: the unregulated industry has expanded from $303.11 billion in 2024 to $332.89 billion in 2025 and is forecast to reach $479.73 billion by 2029.

Government and public sector efficiency – Governments also rely on personal data for taxation, public health, planning, and service delivery.

The famous saying “If you’re not paying for the product, you are the product” describes the way many digital services make money from users rather than charging us directly.

Checkout our infographic on what your digital footprint is. Learn about what information is compiled and tracked and how you can protect yourself.

While personal data is valuable for businesses, it also attracts cybercriminals.

Large stores of user data are highly attractive to bad actors, who can use stolen or breached financial and personal information for identity theft, financial fraud, or phishing attacks and other crimes. In 2024, global losses from payment fraud alone exceeded USD 1 trillion.

Other common risks of personal data exposure include:

Phishing and social engineering scams

Reputational harm

Discrimination or harassment

Employment or insurance fraud.

Our personal data is fueling the AI revolution. In fact, AI is the ultimate data broker. Without our consent, companies are scraping our personal information from across the internet and using it to train AI systems—and that’s just one way AI threatens personal privacy. Academics have found 12 other risks:

AI collects massive amounts of data from everywhere, increasing risks of surveillance. AI can harvest phone numbers, emails, and personal information and images from websites, social media, and public records.

AI automatically links identity information across various data sources, increasing risk of personal identity exposure. Using pattern recognition, AI can match and correlate scraped data from scattered sources and readily knit it together into a clear profile of a person.

When AI combines data about a person, it makes inferences from it, boosting the risks of privacy invasion.

AI infers personality or social attributes from physical characteristics, potentially leading to bias and discrimination.

AI repurposes data beyond its original intended use, further eroding user control.

AI has opaque data practices which fail to inform and give users control over how their data is used.

AI storage practices and data requirements risk data leaks and improper access.

AI can reveal sensitive information, such as through generative AI techniques.

AI’s ability to generate realistic but fake content makes it easier to spread false or misleading information.

AI can cause improper sharing of data when it infers additional sensitive information from raw data.

AI makes sensitive information more accessible to a wider audience than intended.

AI technologies invade personal space or solitude, often through surveillance measures.

One rapidly growing negative impact of AI data gathering is targeted scams and phishing. AI makes fraud more convincing by using readily available personal data to tailor attacks. AI-generated voice deepfakes, and deepfake texts, emails and web sites promoting fake products, deals and giveaways are part of the highest reported form of scam, according to the FTC.

In December 2024, the European Data Protection Board advised national regulators to allow personal data to be used for AI training, so long as the final product doesn’t reveal personal information. Regulating AI is an evolving area.

In the EU, the GDPR gives individuals broad rights over their personal data, including the ability to access, correct, delete, and transfer it. It also requires organizations to collect only what’s necessary and to protect data through strict security and consent standards.

The United States does not have one comprehensive federal privacy law. Instead, it has a patchwork of state-level privacy laws, such as the California Consumer Privacy Act, as amended by the California Privacy Rights Act which grants residents rights to access, delete, and opt out of the sale of their personal information. Other states, including Virginia, Colorado, and Utah, have introduced similar laws.

Other regions have their own data privacy laws, which all generally align with international privacy principles of consent, transparency, and security.

Fast-moving privacy-enhancing technology (PET) developments are trying to balance data use with privacy protection and regulatory compliance. Some examples of emerging technologies are:

Data anonymization – the process of removing certain pieces of private information in data collections that could be used to identify a person, to protect customer privacy while still using the data

Differential privacy – a statistical technique that adds controlled “noise” to datasets so individual records cannot be identified, while still allowing meaningful insights from aggregated data

Privacy-preserving identity and access management (IAM) – decentralized or token-based verification systems (such as self-sovereign identity and zero-knowledge proofs) which authenticate users without exposing unnecessary personal data. Examples include digital ID wallets and verifiable credentials, which give individuals more control over what identity information they share.

You can take practical steps to reduce your exposure and protect your personal information online:

Use strong, unique passwords and a password manager.

Enable two-factor authentication (2FA).

Review privacy settings on social media and apps.

Avoid oversharing personal information publicly.

Avoid sensitive transactions on public Wi-Fi. Use a truly private VPN like MySudo VPN.

Monitor financial accounts and credit reports.

Use encrypted messaging and privacy-focused browsers such as those in the MySudo privacy app.

Regularly clear cookies and browsing history.

Check out the full range of privacy tools in MySudo suite.

Personal Data

From our blog:

What constitutes personally identifiable information or PII?

14 real-life examples of personal data you want to keep private

The top 10 ways bad actors use your stolen personal information

What should I do if I’ve been caught in a data breach?

How MySudo keeps you safe on social media even in a data breach