Artificial intelligence (AI) is writing code, diagnosing cancer, approving mortgages, generating art, driving cars, and deciding what billions of people see on social media. It’s being called the most transformative technology since electricity. Governments are rushing to regulate it, companies are betting trillions on it, and yet most people still don’t really know what it is, so here we go.

AI refers to computer systems that can perform tasks we usually associate with human intelligence, such as understanding language, recognizing patterns, making decisions, solving problems, and learning from experience.

AI is already in most of our everyday tools like voice assistants, maps and navigation apps, email spam filters, streaming services, online shopping, and bank fraud detection. Many businesses use AI to automate routine tasks and improve customer service.

There’s no official definition of AI. The United Nations calls AI “self-learning, adaptive systems.”

The most common technical definition is this one from the OECD: “An AI system is a machine-based system that, for explicit or implicit objectives, infers, from the input it receives, how to generate outputs such as predictions, content, recommendations, or decisions that can influence physical or virtual environments. Different AI systems vary in their levels of autonomy and adaptiveness after deployment.”

AI became a field of study in 1956, when the term “artificial intelligence” was coined at the Dartmouth workshop, although researchers such as Alan Turing had already asked whether machines could think. It wasn’t until the 2010s that AI evolved into the transformative technology we know today. This short video runs through the history of AI:

Source: IBM

AI works by learning patterns from data and then using those patterns to make decisions, make predictions, or generate content. In other words, AI learns from experience, similar to how humans learn from examples but at a much larger scale and speed.

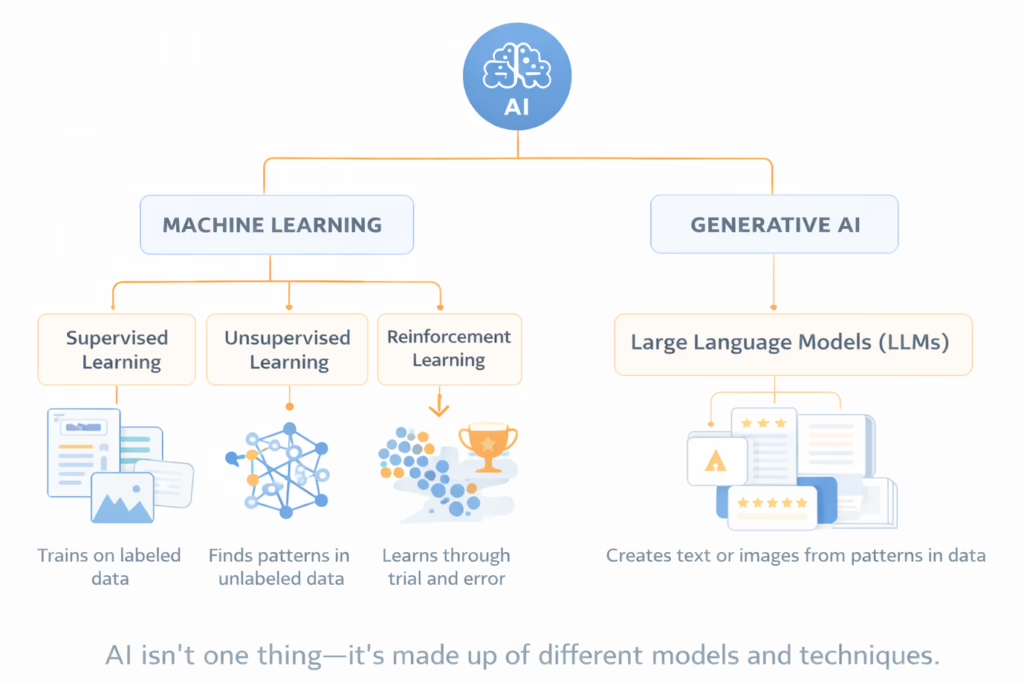
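To make "learning patterns from data" a bit more concrete, here is a minimal sketch of a toy spam filter. The training messages, the labels, and the function names (`learn_word_counts`, `predict`) are all invented for illustration, and simple word counting stands in for the far more sophisticated methods that real systems use.

```python
from collections import Counter

# Hypothetical toy training data, invented for this illustration.
TRAINING_EXAMPLES = [
    ("win a free prize now", "spam"),
    ("free money claim your prize", "spam"),
    ("meeting moved to friday", "ham"),
    ("lunch on friday with the team", "ham"),
]


def learn_word_counts(examples):
    """Learning step: count how often each word appears under each label."""
    counts = {"spam": Counter(), "ham": Counter()}
    for text, label in examples:
        counts[label].update(text.lower().split())
    return counts


def predict(counts, text):
    """Prediction step: choose the label whose training words best match the message."""
    words = text.lower().split()
    spam_score = sum(counts["spam"][word] for word in words)
    ham_score = sum(counts["ham"][word] for word in words)
    return "spam" if spam_score > ham_score else "ham"


model = learn_word_counts(TRAINING_EXAMPLES)
print(predict(model, "claim your free prize"))   # spam
print(predict(model, "team meeting on friday"))  # ham
```

Nobody wrote a rule saying "free" or "prize" signals junk mail. The program picked that up from the examples, which is also why the quality of the examples matters so much.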

AI isn’t a single technology but a collection of techniques and systems:

All the AI we interact with today is narrow AI (also called weak AI): systems designed to perform specific tasks, such as filtering out junk emails. General AI, or artificial general intelligence (AGI), on the other hand, is AI that can understand, learn, and apply intelligence to any task a human can. AGI doesn’t exist yet and may be decades away, if it’s even possible.

Machine learning is the technology powering most modern AI. Instead of programming explicit rules, ML systems learn from examples. There are several types of machine learning:

Large language models (LLMs) are a type of machine learning model designed to work with language. LLMs are trained on massive amounts of text so they can capture patterns in words, sentences, and meaning. This allows them to generate text, answer questions, summarize information, translate languages, and hold conversations.

LLMs don’t “think” or “understand” in a human sense. They predict the most likely next word based on patterns in their training data. That makes them powerful for language tasks, but also means they can make mistakes, sound confident when they’re wrong, or reflect biases in the data they were trained on.
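Next-word prediction can be sketched with a much simpler technique than a real LLM uses. The example below is a bigram counter, not a neural network: it tallies which word follows which in a tiny made-up corpus and then returns the most frequent follower. The corpus and the function names are assumptions chosen for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus, invented for this illustration.
CORPUS = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."


def train_bigrams(text):
    """Count, for every word, which words follow it and how often."""
    follow_counts = defaultdict(Counter)
    words = text.split()
    for current_word, next_word in zip(words, words[1:]):
        follow_counts[current_word][next_word] += 1
    return follow_counts


def most_likely_next(follow_counts, word):
    """Return the most frequent follower of `word`, or None if the word was never seen."""
    candidates = follow_counts.get(word)
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


bigram_model = train_bigrams(CORPUS)
print(most_likely_next(bigram_model, "the"))  # cat
print(most_likely_next(bigram_model, "sat"))  # on
```

The model answers "cat" after "the" only because that pairing was most common in its training text. It has no idea whether a cat is actually involved, which is the same reason a far larger model can sound confident while being wrong.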

Deep learning is a specialized type of machine learning that uses neural networks: systems loosely modeled on how the human brain works, with layers of interconnected nodes that process information.

These neural networks can have many layers (hence the “deep” in “deep learning”), allowing them to learn extremely complex patterns. Early layers might detect simple features like edges in an image, middle layers recognize parts like eyes or wheels, and final layers identify whole objects like faces or cars.
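The idea of layers building on each other can be shown with a deliberately tiny network. In this sketch the weights are picked by hand so the example stays readable, whereas real deep learning systems learn their weights from data and have many more layers and nodes. The function names are hypothetical.

```python
def step(value):
    """Simple activation: the node fires (1) only if its total input is positive."""
    return 1 if value > 0 else 0


def neuron(inputs, weights, bias):
    """One node: weighted sum of its inputs plus a bias, passed through the activation."""
    total = sum(i * w for i, w in zip(inputs, weights)) + bias
    return step(total)


def tiny_network(a, b):
    """Two-layer network answering: is exactly one of the two inputs switched on?"""
    # First layer: two simple feature detectors (weights chosen by hand).
    either_on = neuron([a, b], [1, 1], -0.5)  # fires if at least one input is on
    both_on = neuron([a, b], [1, 1], -1.5)    # fires only if both inputs are on
    # Second layer: combines the simple features into a more complex one.
    return neuron([either_on, both_on], [1, -1], -0.5)


for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(a, b, "->", tiny_network(a, b))
```

No single node here can answer the "exactly one" question on its own. It takes a second layer working on the first layer's outputs, which is a small-scale version of edges combining into eyes and eyes into faces.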

Deep learning is behind most of the impressive AI breakthroughs such as facial recognition, language translation, voice assistants, self-driving cars, and medical imaging analysis.

The newest AI category is generative AI, which refers to systems that create new content rather than just analyzing or categorizing existing content. These tools can generate text, images, music, video, and code.

ChatGPT, Perplexity AI, and Midjourney are all examples of generative AI. They rely on LLMs or image generation models that are trained on massive datasets to capture patterns in human language or visual art, then use those patterns to create new outputs.

“Generative” doesn’t mean the AI is creative in the human sense. These systems are recombining and transforming patterns they learned from training data in statistically probable ways. They don’t understand meaning or have intentions but rather predict what should come next based on patterns.

AI is everywhere and spreading. See Google’s 1,001 real-world gen AI use cases from the world’s leading organizations. AI is in just about every industry; for example:

AI is also in your smartphone, virtual assistants, streaming services, social media feeds, email and messaging apps, online shopping sites, navigation apps, wearable tech, smart home devices, and more.

There are many advantages of AI, including:

Efficiency and productivity: AI systems can process vast amounts of information quickly, reducing time spent on repetitive or administrative tasks. This can free people to focus on creative, strategic, or interpersonal work.

Better decisions: When designed and used carefully, AI can support better decisions by identifying patterns humans might miss, particularly in complex or data-rich environments such as healthcare or climate modeling.

Innovation and discovery: AI accelerates research by analyzing large datasets, simulating scenarios, and generating hypotheses. It is increasingly used in drug discovery, materials science, and scientific modeling.

Accessibility and inclusion: AI-powered tools such as speech-to-text systems, language translation, and assistive technologies can improve accessibility for people with disabilities.

Economic growth: AI has the potential to drive new industries, create new roles, and increase overall economic output.

But with these benefits come serious risks, such as:

Bias and discrimination: AI systems trained on historical data can reproduce and reinforce existing social biases. This can lead to discriminatory outcomes in areas such as hiring, lending, policing, and access to services.

Loss of transparency: Many AI models operate as “black boxes,” making it difficult to understand how decisions are reached. This undermines trust, accountability, and the ability to challenge unfair outcomes. There’s a huge push for trustworthy AI to address this and other problems with AI.

Loss of privacy and increased surveillance: AI often relies on large-scale data collection and analysis, and it can draw inferences from sensitive personal information. Without safeguards, this can lead to intrusive surveillance and loss of privacy.

Automation bias and overreliance: People may defer too readily to AI recommendations, even when those systems are wrong. This risk increases when systems appear authoritative or objective.

Misinformation and manipulation: Generative AI makes it easier to produce convincing fake content at scale, including deepfakes, impersonation, and targeted disinformation campaigns.

Economic disruption: AI will eliminate some jobs and create others. The transition may be uneven, affecting some workers and industries more than others and increasing inequality.

Safety and security risks: AI systems can be hacked, manipulated, or repurposed for harm, including cybercrime, fraud, and automated attacks.

Current AI has some big limitations. One major issue is hallucination, which is when AI generates information that sounds plausible but is false. LLMs might confidently cite non-existent research papers or attribute quotes to the wrong people because they predict what sounds right, not what is right. They have no concept of truth versus falsehood, only probability.

AI also struggles with common sense, real-world understanding, and genuine reasoning. It doesn’t transfer knowledge from one domain to another as flexibly as humans naturally do. An AI system might excel at medical diagnosis but generally can’t apply that expertise to veterinary medicine without substantial additional training.

AI also requires enormous amounts of data and computing power, making cutting-edge AI development expensive and resource-intensive. There are also growing concerns about the environmental impact of training and running large AI models.

Ethically and socially, AI raises other big questions:

These questions have led to the development of ethical, responsible, and trustworthy AI frameworks that emphasize human oversight, fairness, transparency, and accountability. Learn more about trustworthy AI.

Recent research suggests Americans are open to AI but are concerned about its impact on humans. Similarly, people in 25 other countries worldwide are more concerned than excited about AI’s growing presence in daily life.

AI governance and regulation are advancing worldwide. Examples include:

We don’t really know what the next 10 years hold for AI. The OECD has explored 4 possible trajectories of AI out to 2030, looking at how AI might ramp up or slow down this decade.

Some likely developments to watch include:
